Harness is all you need: launching thClaws

When we reverse-engineered Claude Code earlier this year — the sourcemap that Anthropic accidentally pushed to npm in March — we expected to find a marvel of AI engineering. A clever loop. A novel orchestration. Some secret sauce that explained why the tool felt like a colleague instead of a chatbot.

What we found instead:

1.6% of the codebase was AI decision logic. The other ~98.4% was deterministic harness — permission gates, context compaction, tool routing, sub-agent dispatch, retry policy, prompt-cache markers, sandboxes.

The model wasn’t the product. The harness was.

That insight became the founding decision behind thClaws, and three weeks later we’re shipping v0.3.1. Three weeks. Not because we’re geniuses, but because most of an agent isn’t AI. Once we accepted that, the work stopped being research and started being plumbing.

“Harness is all you need”

The 2017 paper that changed AI was titled “Attention is all you need.” Eight years later the lesson has rotated by one position. Attention is still all you need inside the model. But once the model starts doing things in the world — reading files, running shell commands, calling external services — attention is no longer the bottleneck. Scaffolding is.

What separates an agent that ships pull requests from a chatbot that talks about pull requests? Not the LLM. They’re often the very same model. What changes is everything around it: the loop, the tool definitions, the permission system, the way history is summarized when context fills, the way file edits are sandboxed, the way sub-agents are spawned and recovered when they wedge.
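To make that split concrete, here is a minimal Rust sketch of such a loop. It is an illustration under stated assumptions, not thClaws code: `ScriptedModel`, `ModelTurn`, `Stop`, `permitted`, `run_tool`, and `run_loop` are hypothetical names, and the model is replaced by a scripted stand-in. The only thing the "model" decides is which turn to emit; the permission check, the tool routing, and the step budget are all deterministic.

```rust
use std::collections::VecDeque;

/// What the model asks for on one turn (hypothetical shape).
#[derive(Debug, Clone, PartialEq)]
enum ModelTurn {
    /// The model wants the harness to run a tool.
    ToolCall { tool: String, input: String },
    /// The model hands a final answer back to the user.
    Final(String),
}

/// Why the loop ended. Every exit path is named explicitly.
#[derive(Debug, PartialEq)]
enum Stop {
    Answered(String),
    Denied { tool: String },
    StepBudgetExhausted,
}

/// Stand-in for the LLM: replays a fixed script of turns.
struct ScriptedModel {
    turns: VecDeque<ModelTurn>,
}

impl ScriptedModel {
    fn next_turn(&mut self, _history: &[String]) -> ModelTurn {
        self.turns
            .pop_front()
            .unwrap_or(ModelTurn::Final(String::from("(no more turns)")))
    }
}

/// Deterministic permission gate: only allow-listed tools may run.
fn permitted(tool: &str, allow: &[&str]) -> bool {
    allow.contains(&tool)
}

/// Deterministic tool routing (a single toy tool for the sketch).
fn run_tool(tool: &str, input: &str) -> String {
    match tool {
        "read_file" => format!("<contents of {input}>"),
        other => format!("<unknown tool {other}>"),
    }
}

/// The loop itself: ask the model, gate, route, record, repeat until a stop condition.
fn run_loop(model: &mut ScriptedModel, allow: &[&str], max_steps: usize) -> (Stop, Vec<String>) {
    let mut history = Vec::new();
    for _ in 0..max_steps {
        match model.next_turn(&history) {
            ModelTurn::Final(text) => return (Stop::Answered(text), history),
            ModelTurn::ToolCall { tool, input } => {
                if !permitted(&tool, allow) {
                    return (Stop::Denied { tool }, history);
                }
                history.push(run_tool(&tool, &input));
            }
        }
    }
    (Stop::StepBudgetExhausted, history)
}
```

Calling `run_loop` with a script of one `read_file` call followed by a final answer, and an allow-list of `["read_file"]`, returns `Stop::Answered`. A script that asks for a tool outside the allow-list returns `Stop::Denied` before anything runs, and a script longer than `max_steps` ends in `Stop::StepBudgetExhausted`.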

This is the harness. As Mike Krieger (CPO, Anthropic) put it at the Cisco AI Summit in February:

“Claude is now writing Claude. For most products at Anthropic it’s effectively 100% just Claude writing — and what we’ve done is created all the right scaffolds around it to let us trust it.”

The future of software isn’t “the LLM will write your app.” It’s “the LLM will be your app’s scheduler”: dispatching tools, skills, and external services the way a kernel schedules processes. Anthropic calls it an agentic application. Andrej Karpathy calls it Software 3.0. Whatever the name, this is the layer eating vertical SaaS, and it runs on harness, not on attention.

We wanted to build at that layer. So we did.

Why a clean-room port

Claude Code is, by all accounts, the best harness shipped to date. But it has two limitations we couldn’t accept:

- It’s locked to Anthropic. No Ollama on a flight. No GPT for one-shots. No Gemini for long context. No DashScope for the Thai market. No multi-provider strategy when you care about cost, latency, or sovereignty.

- It’s a CLI, period. Wonderful for engineers — invisible to the lawyer drafting contracts, the accountant summarizing financials, the PM who’s never opened a terminal.

We loved the design. We didn’t want to fork it. We wanted to take what Claude Code taught us — the loop pattern, the sub-agent recursion guardrails, the skill system, the MCP integration — and build a different shape: native, local, multi-provider, multi-surface, sovereign by design.

So we did a clean-room port to Rust. Not by reading Anthropic’s source — by reading the behavior, then re-implementing the harness pattern from first principles. Rust because we wanted a single 15 MB binary that boots in under 200 ms, runs on six platforms, and never asks you to install Node first. Rust because the seam between user-approved shell commands and arbitrary MCP-server output is exactly the kind of place where memory safety matters.

We also took inspiration from neighbors in the space:

- Claude Code (Anthropic) — the loop, the slash-command UX, the sub-agent recursion guardrails.

- Open Claw (community) — a clear signal that the community is hungry for an agent orchestrator, not just a single agent.

- Claude Cowork — its philosophy of running on your own machine and managing your own files. The seed of our Files tab — a viewer + editor that Cowork itself doesn’t ship.

Together, they sketched the silhouette. We filled it in.

What thClaws is

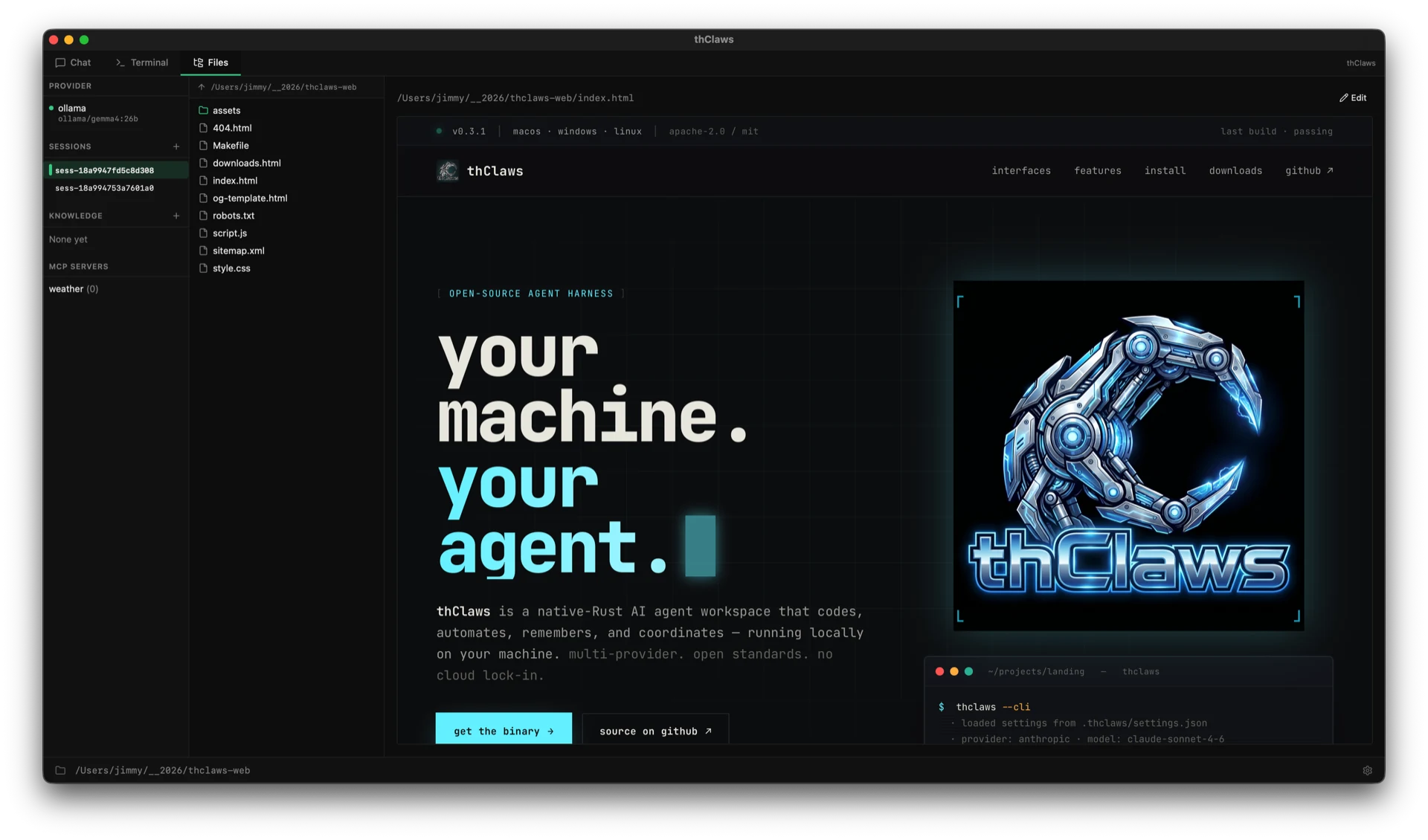

thClaws is a native-Rust AI agent workspace that runs on your machine. One binary, three primary surfaces, plus a fourth for orchestration — every surface backed by the same engine:

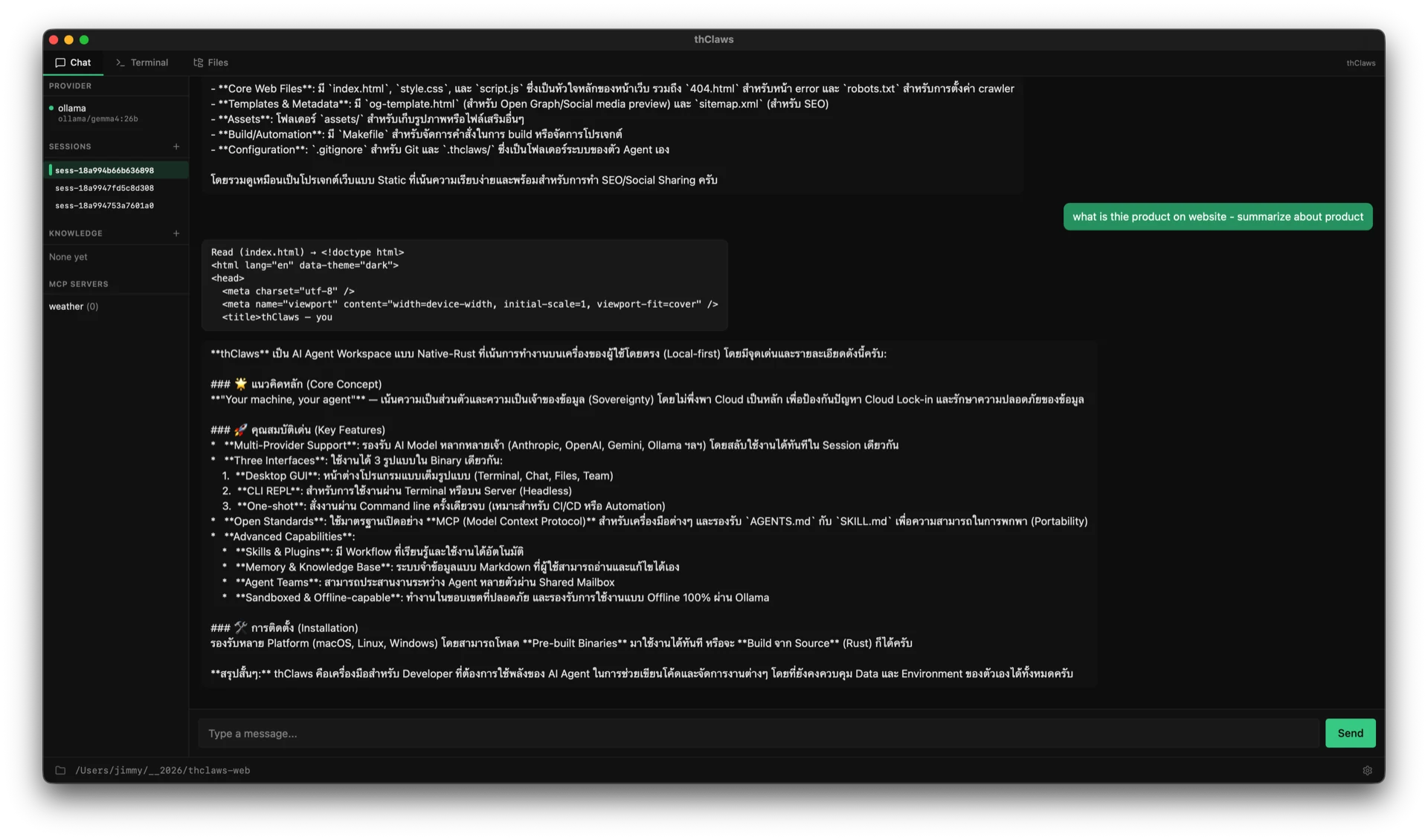

- Chat — for the lawyer, the analyst, the marketer, the PM. Conversational, friendly, no

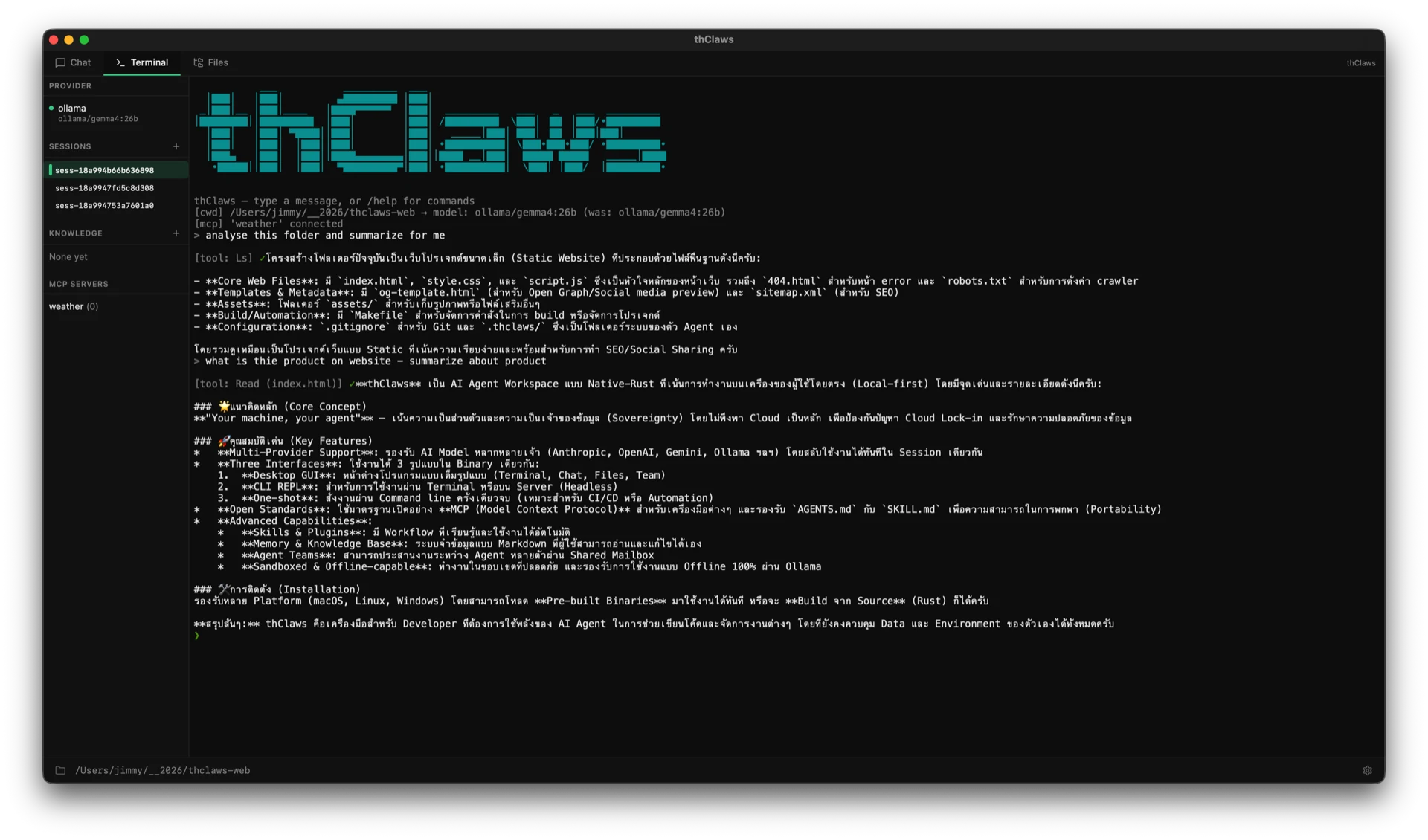

$prompt to learn. - Terminal — for the engineer who lives in tmux. Up-arrow history, slash commands, raw tool output, every keystroke earning its keep.

- Files — preview, edit, browse. CodeMirror for source, TipTap for markdown, full diff view when the agent has touched something.

- Team (extra mode) — multiple thClaws agents coordinating through a shared mailbox and task queue, each in its own git worktree. Backend in one window, frontend in another, the lead merging when both are done.

All four share the same provider stack (Anthropic, OpenAI, Gemini, Alibaba DashScope, OpenRouter, Ollama, Agentic Press), the same skills, the same MCP servers, the same sessions on disk. Switch surfaces, your conversation comes with you.

Files tab — preview, edit, and browse the project. CodeMirror for source, TipTap for markdown.

Terminal tab — REPL with full slash-command coverage and Up-arrow prompt history.

Chat tab — conversational surface for non-developers. Same engine, same session.

What we shipped today (v0.3.1) lives at thclaws.ai, source at github.com/thClaws/thClaws. Apache-2.0 / MIT dual-licensed. Pre-built binaries for macOS (ARM + Intel), Linux (x86_64 + aarch64), Windows (x86_64 + ARM64). One download, one double-click, the agent runs.

Why we built this

Building thClaws taught us something we didn’t expect at the outset: harnessing is its own discipline. It’s not “infrastructure for AI” — it’s a craft, with its own design space, its own failure modes, its own aesthetic. We learned that:

- The hardest decision in an agent loop isn’t what tool to call — it’s when to stop. Compaction policy, sub-agent boundaries, the exact moment the loop yields back to the user. Most of the harness is about endings, not actions.

- Provider abstraction isn’t free. Eleven providers means twenty-two converters and a normalizer in the middle, and every new feature in any one provider tests whether your abstractions hold. We rewrote the message-block enum three times. The fourth time held.

- Sandboxes only work if they’re load-bearing. The moment a permission gate is a checkbox the user clicks “Always allow” on, it’s over. We invest a lot in framing approval requests so they make the right answer obvious.

- A GUI for an agent is not a GUI for chat. It’s a GUI for delegation — a way to watch what someone (something) else is doing on your behalf, with the option to interrupt. Most of our UI design effort goes into observability, not interaction.
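The provider-abstraction arithmetic in the list above (eleven providers, twenty-two converters) matches a hub-and-spoke design: each provider converts into and out of one normalized form, so N providers need 2N converters. The Rust sketch below illustrates that idea under assumptions. `Block`, `Provider`, `ColonWire`, `PipeWire`, and `translate` are hypothetical names, and the two wire formats are toy strings, not any real provider’s API. The `pairwise_converters` function is included only for comparison with a design that has no normalizer.

```rust
/// Normalized message block shared by every provider (hypothetical shape).
#[derive(Debug, Clone, PartialEq)]
enum Block {
    Text(String),
    ToolUse { name: String, input: String },
}

/// Each provider supplies exactly two converters: out of and into the normalized form.
trait Provider {
    fn to_wire(&self, block: &Block) -> String;
    fn from_wire(&self, wire: &str) -> Option<Block>;
}

/// Toy provider: `text:<body>` or `tool:<name>:<input>`.
struct ColonWire;
/// Toy provider: `T|<body>` or `U|<name>|<input>`.
struct PipeWire;

impl Provider for ColonWire {
    fn to_wire(&self, block: &Block) -> String {
        match block {
            Block::Text(t) => format!("text:{t}"),
            Block::ToolUse { name, input } => format!("tool:{name}:{input}"),
        }
    }
    fn from_wire(&self, wire: &str) -> Option<Block> {
        if let Some(body) = wire.strip_prefix("text:") {
            return Some(Block::Text(body.to_string()));
        }
        let (name, input) = wire.strip_prefix("tool:")?.split_once(':')?;
        Some(Block::ToolUse { name: name.to_string(), input: input.to_string() })
    }
}

impl Provider for PipeWire {
    fn to_wire(&self, block: &Block) -> String {
        match block {
            Block::Text(t) => format!("T|{t}"),
            Block::ToolUse { name, input } => format!("U|{name}|{input}"),
        }
    }
    fn from_wire(&self, wire: &str) -> Option<Block> {
        if let Some(body) = wire.strip_prefix("T|") {
            return Some(Block::Text(body.to_string()));
        }
        let (name, input) = wire.strip_prefix("U|")?.split_once('|')?;
        Some(Block::ToolUse { name: name.to_string(), input: input.to_string() })
    }
}

/// Translate between any two providers by passing through the normalized form.
fn translate(from: &dyn Provider, to: &dyn Provider, wire: &str) -> Option<String> {
    from.from_wire(wire).map(|block| to.to_wire(&block))
}

/// Converters needed with a normalizer in the middle: two per provider.
fn hub_converters(n: usize) -> usize {
    2 * n
}

/// Converters needed if every provider translated directly to every other one.
fn pairwise_converters(n: usize) -> usize {
    n * n.saturating_sub(1)
}
```

With eleven providers, `hub_converters(11)` is 22 while `pairwise_converters(11)` is 110. The cost of the hub is that every provider-specific feature has to fit the normalized enum, which is why a change in one provider can force a change to `Block` itself.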

These are insights you can only earn by building. We earned them by building thClaws — and we’ll be unpacking them across the posts that follow this one.

Open source, on purpose

We believe agent infrastructure should be a public good — like HTTP, like Linux, like POSIX. If the layer that becomes the runtime for the next decade of software is controlled by a single vendor, we’ll see consolidation worse than anything browser monocultures or app-store gatekeeping ever produced.

So thClaws is open source. ThaiGPT Co., Ltd. holds the copyright; the community holds the keys to its evolution. The repo ships with full CONTRIBUTING / CODE_OF_CONDUCT / SECURITY policies because we mean it — this is meant to grow.

The harness pattern doesn’t care what domain it serves. The same scaffolding that runs a coding agent also runs a contract-drafting agent, a research agent, or a music-production agent. The differences live in the skills, the system prompt, the toolset. The kernel, meaning the harness, wants to be shared.

That’s the future we’re building toward. Not “an agent for Thai developers.” Not “another Claude Code clone.” A foundation that can be extended into any kind of AI application — coding, drafting, research, design, ops, education — with the harness doing the load-bearing work and the model dispatching to whatever lives on top.

What’s next

v0.3.1 is the first release we feel comfortable calling stable. Ahead of us:

- Deeper Agent Teams — richer mailbox semantics, role-based permissions, recovery patterns when teammates wedge.

- A skills & plugins registry — so domain expertise from the community can be packaged and shared without friction.

- Mobile companion — read-only view of agent sessions on your phone, with push notifications for approval requests on long-running runs.

- Headless deploy — long-running agent processes via Agentic Press hosting, scheduled and webhook-triggered.

If any of that resonates — if you’ve felt the gap between “chatbot” and “agent that actually does work” — we’d love your help building it.

Get started

- Download v0.3.1 — six binaries, ~one minute to install.

- Source on GitHub — star, fork, file an issue.

- Contribute — the harness pattern is bigger than any one team.

The model isn’t the product. The harness is. And the harness, finally, is yours.

— Jimmy